Artificial life, AL, is a fairly new science and a first attempt to define the area involves parallels with artificial intelligence. As AI relates to intelligence, so artificial life will relate to life itself. Like the AI researchers, investigators in artificial life are attempting to create this phenomenon in a medium other than the original and natural. A virtual medium wherein the essence of life may be abstracted from all details of its implementation is the computer. But where AI begins with the brain and works down, AL begins with the “boots” and pushes up.

The promotion of artificial life has many different stimuli. One of its gurus, Chris Langton, says: ‘We would like to build models that are so lifelike that they cease to be models of life and become examples of life themselves.’ A less spectacular motivation is to view AL as a generator of insight into the understanding of natural life. And, as always, when it comes to new and challenging areas, the old technological imperative lurks in the background: if it is possible to realize something, then do it.

The accumulated views on AL have crystallized into two main schools: weak artificial life and strong artificial life. The weak artificial life school wants to simulate the mechanisms of natural biology. The strong artificial life proponents strive for the creation of living creatures, a process sometimes called computational ethology.

Preoccupation with AL gives rise to several philosophical questions and also to some problematic definitions. A definition of life given in this book involves the use of autopoiesis. Suppose that a machine extracts energy from the environment, grows, reproduces and repairs an injury to its body. Is this machine living or not? Alternatively, is something missing in the definition? Complexity, self-organization, emergent behaviour and adaptive responses are attributes inherent to all living creatures and are attributes hitherto only occurring in the bodies of biological creatures. Anyhow, life is a process and it is the form of this process, not the matter, that is the essence of life. Many researchers state that the logic governing this process is what counts, not the physical medium where it resides. Life is characterized by what it is doing rather than by what it is made of. Apparently, the secret of life lies not with the chemical ingredients as such, but with the logical structure and organizational arrangement of the molecules. But life is not located in the property of any single molecule. It is a collective property of systems of interacting molecules.

Of all concepts to define life, complexity seems to have a key role. In living systems, the whole is always more than the sum of its parts. Living systems are tremendously complex and so many variables are at work that the overall behaviour may only be understood as the emergent consequences or the holism of all interactions.

Now, let us assume that all of the qualities listed above exist within a very advanced computer program. If human beings then watch on a monitor how artificial organisms grow, eventually mutate, reproduce and struggle for survival, is this life which is being observed and if so, does it reside within a body or not?

To solve this problem the Duck Test, a kind of Turing test, has been proposed by some AL researchers. This witty idea can be traced to the work of the French engineer Jacques de Vaucanson, who during the 18th century constructed an extremely realistic mechanical duck. ‘If it looks like a duck and behaves like a duck, it belongs to the category of ducks.’ In other words, if an artificial organism gives a perfect imitation of a living being and fools an observer, it is living, no matter what it is constructed of. The core of life is then to be found in its logical organization, not in the material wherein it resides.

Most biologists agree that the sole purpose of life is living, remaining alive and active; something which inevitably must bring about various kinds of self-interest. Therefore, to build a machine imitating life requires a construction oriented solely towards the maintenance of its own physical frame where life resides. Such a machine should not allow disconnection of its own electrical power and should react like the computer HAL in the film 2001: A Space Odyssey. Perhaps the HAL test would satisfy the needs of the computer ethologist.

AL seems to have started with something that appeared to be quite simple but developed into something very complicated: a complexity that was sometimes impossible to distinguish from what appears to be random. In 1970, the English mathematician John Horton Conway, working at the University of Cambridge, invented a self-developing computer game called the Game of Life. A deterministic set of rules served as the laws of physics, and the internal computer clock determined the elapse of time when the game started.

Designed as a kind of cellular automaton, the screen was divided into cells whose states were determined by the states of their neighbours. The set of rules decided what happened when small squares inhabiting the cells were moved, thereby triggering a cascade of changes throughout the system. According to their moves, the squares could die (and disappear from the screen) or remain as survivors arranging themselves with their neighbours into certain configurations. New squares could also be born and placed on the screen. From Conway’s description we read the following:

- Life occurs on a virtual checkerboard. The squares are called cells. They are in one of two states: alive or dead. Each cell has eight possible neighbours, the cells which touch its sides and its corners.

- If a cell on the checkerboard is alive, it will survive in the next time step (or generation) if there are either two or three neighbours also alive. It will die of overcrowding if there are more than three live neighbours, and it will die of exposure if there are fewer than two.

- If a cell on the checkerboard is dead, it will remain dead in the next generation unless exactly three of its eight neighbours are alive. In that case, the cell will be ‘born’ in the next generation.
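The three rules above can be sketched in a few lines of Python. This is only an illustrative sketch, not Conway's original program: the function name `step`, the representation of the board as a set of `(row, col)` pairs for live cells, and the unbounded board are all assumptions made for this example.

```python
from collections import Counter

def step(live):
    """Return the next generation for a set of live (row, col) cells.

    The board is treated as unbounded (an assumption of this sketch).
    """
    # For every cell that touches at least one live cell, count how many
    # of its eight neighbours are alive.
    counts = Counter(
        (r + dr, c + dc)
        for (r, c) in live
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    # Birth: a dead cell with exactly three live neighbours.
    # Survival: a live cell with two or three live neighbours.
    # Every other cell is dead in the next generation
    # (exposure below two neighbours, overcrowding above three).
    return {
        cell
        for cell, n in counts.items()
        if n == 3 or (n == 2 and cell in live)
    }

# Three cells in a row flip between horizontal and vertical (period two).
blinker = {(1, 0), (1, 1), (1, 2)}
# A 2 x 2 block never changes.
block = {(0, 0), (0, 1), (1, 0), (1, 1)}

print(sorted(step(blinker)))
print(step(block) == block)
```

Storing only the live cells keeps the sketch short and avoids fixing a board size; a fixed grid with edges would need an extra decision about what happens at the border.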

What happened when the game started was that the game played itself, determined by the overall rules. Most of the simple initial configurations settled into stable patterns. Others settled into periodical configurations while some acquired very complex biographies. One of the most interesting discoveries was the ‘glider’, a five cell object that shifted its body with each generation, always moving in the same direction over the screen. It was an oscillating, moving system without physical mass, apparently some kind of evolving artificial life adapting itself and reproducing.
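The glider's movement can be checked directly. The following is a minimal sketch under the same rules; the helper names `step` and `run`, the set-of-coordinates board, and the particular starting orientation of the glider are assumptions chosen for illustration. In this orientation the five-cell shape reappears after four generations, displaced by one cell diagonally.

```python
from collections import Counter

def step(live):
    """Return the next generation for a set of live (row, col) cells."""
    # Count live neighbours for every cell adjacent to a live cell.
    counts = Counter(
        (r + dr, c + dc)
        for (r, c) in live
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    # Born with exactly three neighbours; survive with two or three.
    return {
        cell
        for cell, n in counts.items()
        if n == 3 or (n == 2 and cell in live)
    }

def run(live, generations):
    """Apply `step` repeatedly and return the final set of live cells."""
    for _ in range(generations):
        live = step(live)
    return live

# One orientation of the five-cell glider:
#   . X .
#   . . X
#   X X X
glider = {(0, 1), (1, 2), (2, 0), (2, 1), (2, 2)}

after_four = run(glider, 4)
shifted = {(r + 1, c + 1) for (r, c) in glider}
print(after_four == shifted)
```

The intermediate generations have different shapes; only every fourth generation reproduces the original pattern, which is why the glider appears to crawl across the screen.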

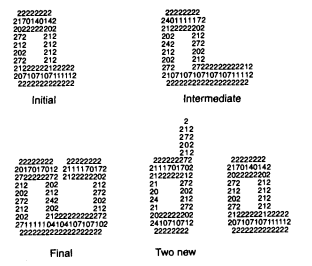

Later, Conway proved that the game was unpredictable; no one could determine whether its patterns were endlessly varying or repeating themselves. Embedded in its logical structure was a capacity to generate unlimited complexity; a complexity of the same kind as found in real biological organisms. The emergence of a stable pattern in the game is shown in Figure 7.5.

Figure 7.5 The emergence of a stable pattern in the Game of Life.

A common view among the AL researchers emerged that life in general was the result of a certain critical measure of complexity. When this level was reached, objects might self-reproduce quite open-endedly and thereby start a process of further complication. This emerging process was entirely self-organizing and apparently against all odds. But it happened over and over again as if life was inevitable, propagating over time.

For some researchers a corollary of this phenomenon was that the building material of the Universe was pure information. The ongoing creation and expansion of the Universe was an ultimate cosmic simulation and the computer was the Universe itself. Life’s programming language was four-based instead of binary, built on the four basic chemicals creating the genetic code. If Nature seems unpredictable or random it has seldom to do with the lack of rules or algorithms. The point is our difficulty to see whether they are extremely complicated or overly simple. Therefore, nothing is done by Nature which cannot be done by a computer. Deterministic consequences of basic rules are however just as difficult to detect and understand in complex AL-simulations as in Nature.

A famous experiment in simulated biology was done by the American Chris Langton in Arizona in 1979. He was one of the first to use cellular automata programs to study environmental stability for living organisms. The program consisted of an infinite plane divided into square cells and populated at random. Cells that were isolated died off because they could not reproduce, while cells in overcrowded areas perished due to lack of resources. Survivors and multipliers were cells with a specified number of living neighbours. These basic rules allowed the production of artificial, electronic, “living” organisms made up of many cells, which interacted with each other in very complex ways. A parameter which measured the size of the area where cells were influenced by neighbours reflected the amount of chaos in the environment. When this parameter was too high, the ensemble of organisms became unstable, fluctuated and died out. When it was too low, the organisms survived but did not change, because there was too little competition. A middle value between the two extremes was, however, an optimum at which the organisms thrived and showed a gradual evolution resembling life on Earth.

In his artificial world built on the computer screen, Langton was seeking the simplest configuration which could reproduce itself according to the same principles as living organisms that obey biological laws. His solution was a series of what he called loops, on the screen, resembling the letter Q and consisting of a square with a tail. The loops incorporated three layers of cells where the core layer contained the data necessary for reproduction. The rules dictating the generation flow allowed a certain growth of the tail until its decided maximum was reached when certain conditions were fulfilled. It thereafter turned 90° to the left three times until it had completed a new square. When the newly formed square resembled its parent, information was passed to the offspring and the two loops separated. The internal arrangement of the cells was then changed to an exact copy of the parent and the reproduction was completed (see Figure 7.6).

An independent shape, consisting of pure information and reproducing itself, obeying deterministic rules of an artificial world, existed on the computer screen. Apparently, it was an organism consisting of patterns of computer instructions maintaining themselves through time, executing the very information of which they consisted. The process was the same as in real organisms and the question was whether it should be regarded as simulated or genuine life.

Figure 7.6 Langton’s self-reproducing loops.

Several years have passed since the basic work of Conway and Langton. Similar work has been done by many other scientists and experiments with self-organizing, computer-insect colonies have shown how local interaction creates emergent, unprogrammed behaviour (see Langton 1989). Evolution of the same kind as in the natural world clearly exists in a virtual computer world. Symbiotic processes, natural selection including predation and growing stability and order, have been detected in simulations by Danny Hillis (1985) using his famous Connection Machine.

In conclusion, the aim of AL is to understand the phenomenon of life by synthesis, that is, by joining simple elements together in order to generate lifelike behaviour in man-made systems. It holds that life is not a property of matter per se, but of the organization of that matter. It resides in the organization of the molecules, not in the molecules themselves.

Source: Skyttner Lars (2006), General Systems Theory: Problems, Perspectives, Practice, Wspc, 2nd Edition.